My PowerShell version is 7.1.0, running on Windows 10 Enterprise.

PS C:\Windows\System32> Set-DatabricksEnvironment -AccessToken -ApiRootUrl “

PS C:\Windows\System32> Export-DatabricksEnvironment -LocalPath ‘C:\Databricks\Export’ -CleanLocalPath

WARNING: This feature is EXPERIMENTAL and still UNDER DEVELOPMENT!

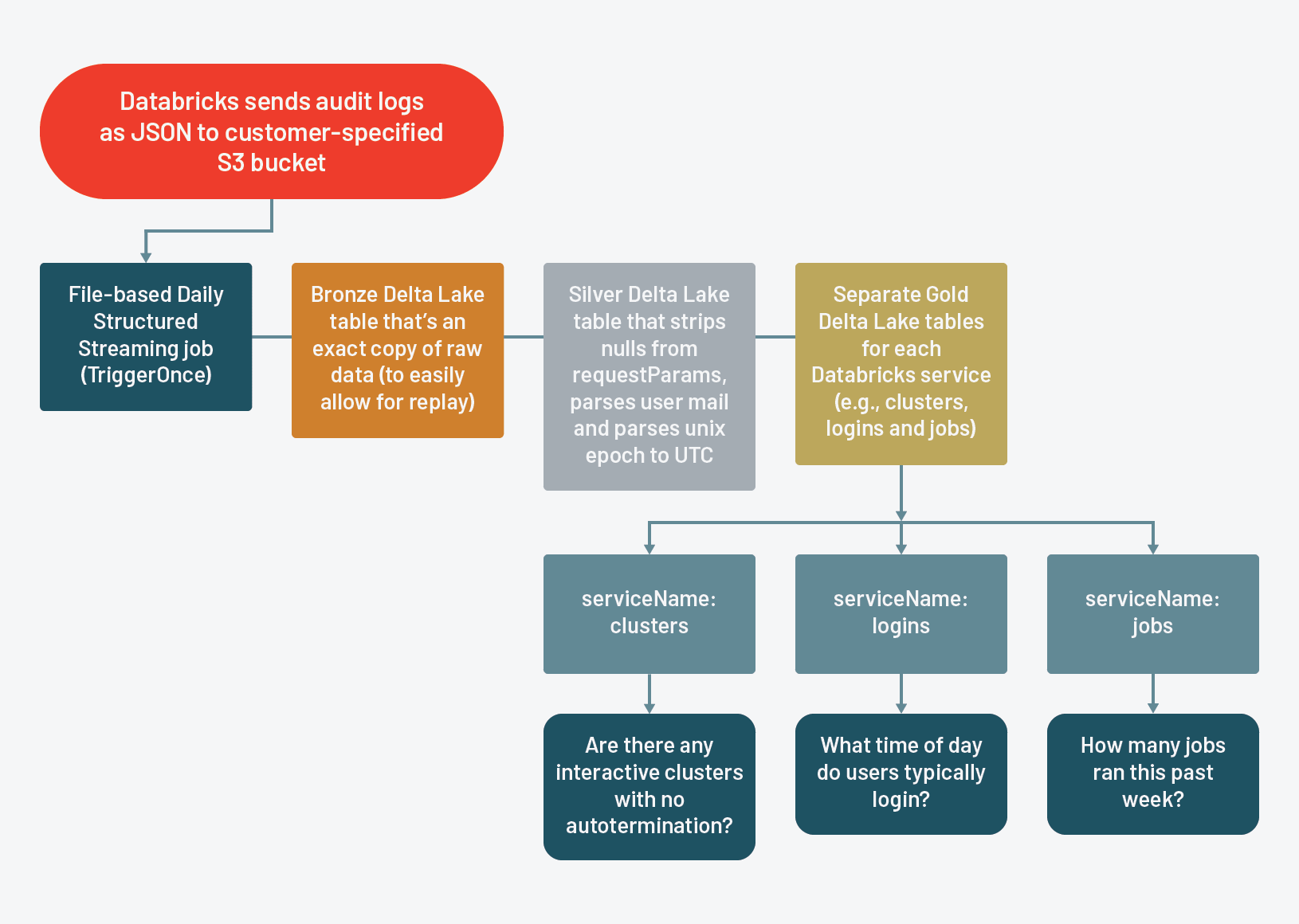

I have the Owner role on the Databricks workspace that I want to export and import, and when I try to export the whole workspace, I get the errors shown above.

In this post, we are going to understand the Databricks Workspace. It will help you get familiar with the Databricks platform.

The Databricks workspace is a kind of organizer that keeps notebooks, libraries, folders, and MLflow experiments.

Notebook: a web-based document that keeps all commands and visualizations in cells.

Library: a collection of code available for a notebook or job to use.

Folder: a container that keeps notebooks together for better organization.

Experiment: a collection of MLflow runs for training a model.

Default Databricks Workspace

By default, two folders are already available in the Databricks workspace: Shared and Users.

Shared: a location shared across the team to keep notebooks and other items.

Users: each individual user's work directory for creating and using notebooks.

If you right-click on the workspace, you will see multiple options, such as:

Create: Notebook, Library, Folder, Experiment

Clone: copy an existing notebook under a different name

Import: upload an existing notebook from your local machine

Export: export the notebook in Archive and HTML format

Copy link address: get the absolute path as a link

In this post, we have learned about the Workspace available in Databricks and how it organizes notebooks, libraries, etc. You can check the next post to get familiar with the Databricks Notebook.

The approach described in this blog post only uses the Databricks REST API and therefore should work with both Azure Databricks and Databricks on AWS!

I recently had to migrate an existing Databricks workspace to a new Azure subscription, causing as little interruption as possible and not losing any valuable content. So I thought a simple Move of the Azure resource would be the easiest thing to do in this case. Unfortunately it turns out that moving an Azure Databricks Service (=workspace) is not supported:

Resource move is not supported for resource types 'Microsoft.Databricks/workspaces'.

I do not know what the problem is/was here, and I did not have time to investigate; instead I needed to come up with a proper solution in time. So I had a look at what needs to be done for a manual export. Basically there are 5 types of content within a Databricks workspace, among them workspace items (notebooks and folders).

For all of them Databricks provides an appropriate REST API to manage them and also to export and import them. This was fantastic news for me as I knew I could use my existing PowerShell module DatabricksPS to do all the stuff without having to re-invent the wheel again.

So I basically extended the module and added new Import and Export functions which automatically process all the different content types. They can be further parameterized to only import/export certain artifacts and to control how updates to already existing items are handled.

The output of the export can of course also be modified manually to your needs. All files are in JSON except for the notebooks, which are exported as .DBC files by default.

A very simple script doing an export and an import into a different environment needs only a few steps: connect to the source environment, run the export, connect to the target environment, and run the import.
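The export and import of workspace items that the migration approach above relies on can be sketched directly against the REST API. The following is a minimal Python sketch, not the DatabricksPS implementation: it assumes the Workspace API 2.0 `export` and `import` endpoints with a bearer token, and the function names, the `(api_root_url, access_token)` pairs, and the example path are hypothetical.

```python
import base64
import json
import urllib.parse
import urllib.request


def build_export_request(api_root_url, access_token, workspace_path, fmt="DBC"):
    """Build (but do not send) a GET request for /api/2.0/workspace/export."""
    query = urllib.parse.urlencode({"path": workspace_path, "format": fmt})
    url = f"{api_root_url.rstrip('/')}/api/2.0/workspace/export?{query}"
    return urllib.request.Request(
        url,
        headers={"Authorization": f"Bearer {access_token}"},
        method="GET",
    )


def build_import_request(api_root_url, access_token, workspace_path,
                         content_bytes, fmt="DBC"):
    """Build (but do not send) a POST request for /api/2.0/workspace/import."""
    body = {
        "path": workspace_path,
        "format": fmt,
        # The API expects the file content as a base64-encoded string.
        "content": base64.b64encode(content_bytes).decode("ascii"),
    }
    url = f"{api_root_url.rstrip('/')}/api/2.0/workspace/import"
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def copy_item(source, target, workspace_path):
    """Export one item from `source` and import it into `target`.

    `source` and `target` are hypothetical (api_root_url, access_token) pairs.
    """
    with urllib.request.urlopen(build_export_request(*source, workspace_path)) as resp:
        exported = json.load(resp)  # response carries base64 data in "content"
    content = base64.b64decode(exported["content"])
    with urllib.request.urlopen(
        build_import_request(*target, workspace_path, content)
    ) as resp:
        return resp.status
```

A call such as `copy_item((source_url, source_token), (target_url, target_token), "/Shared/demo")` would then move a single item. Clusters, jobs, and the other content types have their own endpoints and are not covered by this sketch.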
